How Your 2025 Wrapped Highlights Are Generated: A Tech Deep Dive

Introduction

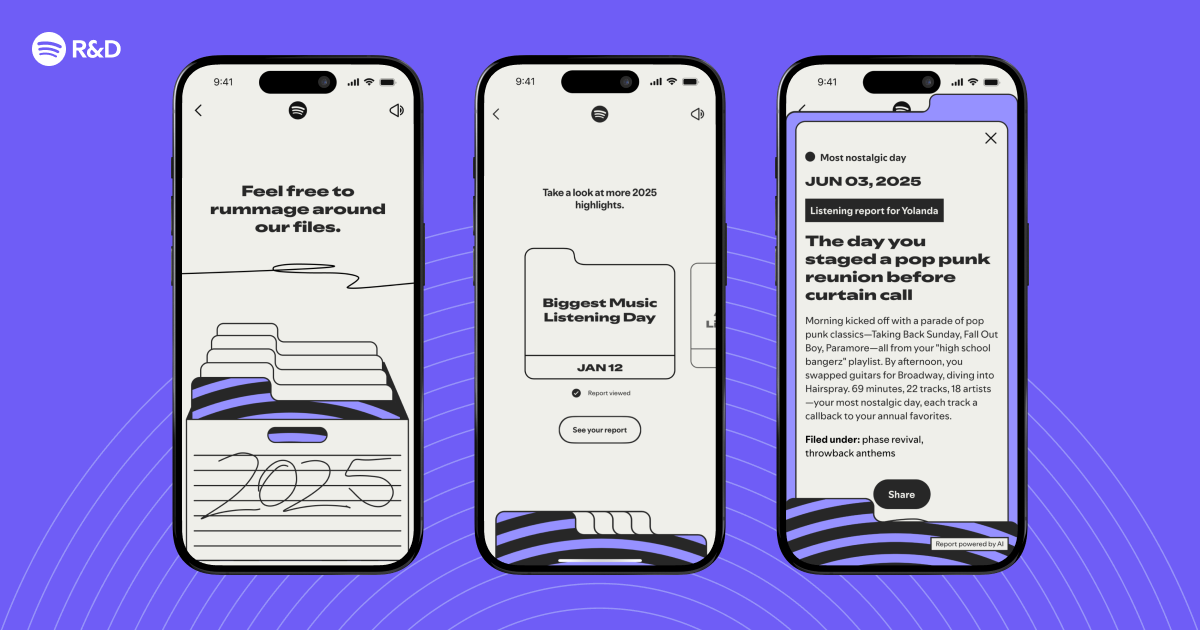

Every December, millions of Spotify users eagerly await their Wrapped experience—a personalized recap of their year in music. But have you ever wondered what happens behind the scenes to transform raw listening data into those delightful story cards and quirky insights? At Spotify Engineering, we asked ourselves: “What if we could identify interesting listening moments from your year, and tell you a story about them?” This guide takes you on a technical journey, step by step, revealing the algorithms, data pipelines, and creative engineering that power your 2025 Wrapped highlights. Whether you're a curious listener or an aspiring data scientist, you'll gain a deeper appreciation for the magic behind the music.

What You Need

- Basic familiarity with data concepts (e.g., data points, aggregation, machine learning)

- An interest in personalization and recommender systems

- Optional: Access to Spotify’s engineering blog or public talks (for further reading)

- A curious mindset—no coding required!

Step-by-Step Guide

Step 1: Collect and Clean the Listening Data

Every interaction you have with Spotify (playing a song, skipping a track, adding to a playlist, or even pausing) is logged as an event. For Wrapped, we aggregate these events over the entire year. The raw data includes timestamps, track IDs, device types, and context (e.g., from a playlist, radio, or search). Data cleaning removes duplicate events, normalizes timestamps (for example, to a single time zone), and handles missing metadata. This massive dataset, terabytes in size, forms the foundation of your personal narrative.
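A minimal sketch of the cleaning step described above, using only the Python standard library. The event shape, the field names (`user_id`, `track_id`, `ts`, `device`, `context`), and the choice to drop events without a track ID are assumptions made for illustration, not the actual Wrapped schema or rules.

```python
from datetime import datetime, timezone

# Hypothetical raw events; field names are illustrative only.
RAW_EVENTS = [
    {"user_id": "u1", "track_id": "t1", "ts": "2025-03-01T23:59:58+00:00",
     "device": "mobile", "context": "playlist"},
    # Exact duplicate of the event above.
    {"user_id": "u1", "track_id": "t1", "ts": "2025-03-01T23:59:58+00:00",
     "device": "mobile", "context": "playlist"},
    # Timestamp with a non-UTC offset and missing device metadata.
    {"user_id": "u1", "track_id": "t2", "ts": "2025-03-02T01:05:00+02:00",
     "device": None, "context": "search"},
    # Missing track ID: unusable for Wrapped in this sketch.
    {"user_id": "u1", "track_id": None, "ts": "2025-03-02T02:00:00+00:00",
     "device": "web", "context": "radio"},
]


def clean_events(events):
    """Deduplicate events, normalize timestamps to UTC, fill missing metadata."""
    seen = set()
    cleaned = []
    for event in events:
        if not event.get("track_id"):
            continue  # assumed rule: drop events that cannot be tied to a track
        # Parse the ISO 8601 string and convert it to UTC.
        ts = datetime.fromisoformat(event["ts"]).astimezone(timezone.utc)
        key = (event["user_id"], event["track_id"], ts)
        if key in seen:
            continue  # same user, track, and instant: treat as a duplicate
        seen.add(key)
        cleaned.append({**event, "ts": ts,
                        "device": event.get("device") or "unknown"})
    # Return events in chronological order.
    return sorted(cleaned, key=lambda e: e["ts"])


cleaned = clean_events(RAW_EVENTS)
```

Here the four raw events reduce to two: the duplicate and the event with no track ID are dropped, and the `+02:00` timestamp is shifted to UTC before sorting.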

Step 2: Extract Meaningful Features

Not every play is equally interesting. To identify “highlight moments,” we create features from the raw logs. Examples include:

- Late-night listening sessions: grouping plays that start between midnight and 5 AM.

- Genre diversity spikes: days when you jumped from classical to hip-hop to indie.

- Repeat binges: songs you played more than 10 times in a week.

- Mood-based changes: shifts from upbeat to mellow tracks during certain months.

We also compute engagement metrics like skip rates, completion percentages, and playlist additions. These features are stored in a structured format ready for analysis.
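Two of the features listed above can be sketched in a few lines. This is an illustration under stated assumptions: plays are represented as hypothetical `(track_id, started_at)` tuples with local-time `datetime` values, and "a week" is interpreted as an ISO calendar week.

```python
from collections import Counter
from datetime import datetime


def late_night_plays(plays):
    """Return plays that started between midnight (inclusive) and 5 AM (exclusive)."""
    return [(track_id, started_at) for track_id, started_at in plays
            if 0 <= started_at.hour < 5]


def repeat_binges(plays, threshold=10):
    """Return {(iso_year, iso_week, track_id): count} for tracks played
    more than `threshold` times within a single ISO week."""
    counts = Counter()
    for track_id, started_at in plays:
        iso = started_at.isocalendar()  # (ISO year, ISO week number, weekday)
        counts[(iso[0], iso[1], track_id)] += 1
    return {key: n for key, n in counts.items() if n > threshold}


# Hypothetical sample: one track played 11 times in a day, plus one other play.
sample_plays = [("t1", datetime(2025, 6, 2, hour)) for hour in range(11)]
sample_plays.append(("t2", datetime(2025, 6, 3, 14)))

night = late_night_plays(sample_plays)
binges = repeat_binges(sample_plays)
```

With this sample, `night` holds the five plays that started before 5 AM, and `binges` has a single entry for `t1` with a count of 11.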

Step 3: Apply Machine Learning to Rank Moments

With thousands of potential moments per user, we need to pick the most meaningful ones. A combination of unsupervised and supervised learning models does the trick. For example:

- Anomaly detection identifies outliers (e.g., a sudden spike in listening during finals week).

- Clustering groups similar listening patterns (e.g., “Your 3 AM study sessions”).

- Sentiment analysis of song lyrics, combined with audio features such as valence and energy, estimates emotional arcs.

A ranking model scores each candidate moment based on uniqueness, user engagement, and narrative potential. Only the top 10–15 moments make the final cut.
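To make the outlier and ranking ideas concrete, here is a deliberately simple sketch. It swaps in a z-score test for anomaly detection and a fixed weighted sum for the ranking model; both are stand-ins chosen for readability, and the weights, threshold, and field names are assumptions rather than the models used in production.

```python
from statistics import mean, pstdev

# Assumed weights for the three criteria named in the text.
WEIGHTS = {"uniqueness": 0.5, "engagement": 0.3, "narrative": 0.2}


def zscore_outliers(daily_minutes, threshold=2.0):
    """Return {day: z_score} for days whose listening time is unusually high."""
    values = list(daily_minutes.values())
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return {}  # every day is identical, so nothing stands out
    scores = {day: (minutes - mu) / sigma
              for day, minutes in daily_minutes.items()}
    return {day: z for day, z in scores.items() if z > threshold}


def rank_moments(candidates, top_k=15):
    """Score each candidate with a weighted sum and keep the top_k."""
    def score(candidate):
        return sum(weight * candidate[name] for name, weight in WEIGHTS.items())
    return sorted(candidates, key=score, reverse=True)[:top_k]


# Hypothetical data: nine ordinary days and one spike.
minutes = {f"day{i}": 60 for i in range(1, 10)}
minutes["day10"] = 300
spikes = zscore_outliers(minutes)

candidates = [
    {"id": "A", "uniqueness": 0.9, "engagement": 0.5, "narrative": 0.8},
    {"id": "B", "uniqueness": 0.2, "engagement": 0.9, "narrative": 0.4},
    {"id": "C", "uniqueness": 0.6, "engagement": 0.6, "narrative": 0.9},
]
top_two = rank_moments(candidates, top_k=2)
```

In this sample only `day10` is flagged as a spike, and candidates A and C outrank B. A learned ranking model would replace the hand-set weights, but the interface (candidates in, a short ordered list out) stays the same.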

Step 4: Build the Narrative with Templates

Now we transform data points into stories. We have a library of template narratives for different moment types: “Your Song of the Summer,” “The Artist You Discovered,” “Your Genre Shift.” Each template includes slots for dynamic text (song names, percentages, dates). Natural Language Generation (NLG) fills these slots with personalized numbers and descriptors. For instance: “You listened to ‘Blinding Lights’ 47 times. At about 3 minutes 20 seconds per play, that’s more than two and a half hours on repeat!” Visual assets (colors, animations) are also selected based on your top genres or moods.
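Slot filling can be illustrated with plain string templates. The template keys, slot names, and wording below are hypothetical, and the 200-second track length is an assumed value used only to show the arithmetic.

```python
# Hypothetical template library keyed by moment type.
TEMPLATES = {
    "repeat_binge": ("You listened to '{track}' {plays} times. "
                     "That's about {hours:.1f} hours on repeat!"),
    "new_artist": "You discovered {artist} in {month} and kept listening.",
}


def listening_hours(plays, track_seconds):
    """Convert a play count and a track length in seconds into total hours."""
    return plays * track_seconds / 3600


def render_moment(moment_type, **slots):
    """Fill a template's slots; raises KeyError if a required slot is missing."""
    return TEMPLATES[moment_type].format(**slots)


card_text = render_moment(
    "repeat_binge",
    track="Blinding Lights",
    plays=47,
    hours=listening_hours(47, 200),  # assumed track length: 200 seconds
)
```

Computing derived numbers such as total hours from the raw counts, rather than hard-coding a comparison into the template, keeps each generated sentence consistent with the data behind it.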

Step 5: Render the Experience Across Platforms

The final step is delivering the Wrapped story to your device. The backend generates a lightweight JSON payload with all narrative components. On mobile apps, a custom rendering engine reads this JSON and assembles the interactive story cards—slide by slide, with parallax effects and sound snippets. On the web version, similar logic runs in JavaScript. Performance is critical: the entire experience must load in under three seconds, even on slower connections. Caching and edge delivery ensure global readiness on launch day.
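As a sketch of what such a payload might look like, the snippet below serializes a list of story cards into compact JSON. The field names (`version`, `user_ref`, `cards`, `index`) are invented for illustration; the real payload format is not described here.

```python
import json


def build_payload(user_ref, cards):
    """Serialize story cards into a compact JSON string for the client."""
    payload = {
        "version": 1,
        "user_ref": user_ref,  # assumed opaque reference, not a raw user ID
        "cards": [{"index": i, **card} for i, card in enumerate(cards)],
    }
    # Compact separators drop optional whitespace to keep the payload small.
    return json.dumps(payload, separators=(",", ":"), ensure_ascii=False)


payload_json = build_payload(
    "opaque-ref-123",
    [
        {"type": "repeat_binge", "text": "You had one song on repeat."},
        {"type": "genre_shift", "text": "Your taste changed in June."},
    ],
)

# A client would parse the payload and render the cards in index order.
decoded = json.loads(payload_json)
```

Each client (mobile or web) can then render from the same parsed structure, which keeps the narrative logic on the backend and the presentation logic on the device.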

Step 6: Testing and Quality Assurance

Before going live, we run the full pipeline against thousands of simulated user profiles. We check for:

- Accuracy: Are the highlighted moments truly representative of the user’s year?

- Diversity: Do narratives cover different facets (top artists, genres, moods)?

- Privacy: No personally identifiable information is exposed in the shareable format.

We also monitor feedback loops—if users consistently skip a certain narrative, we adjust the ranking model. Continuous iteration makes each year’s Wrapped better than the last.
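Two of the checks above lend themselves to simple automated tests. The sketch below is one possible shape for them: the forbidden key list, the email pattern, and the minimum of three distinct card types are all assumptions, and a real privacy review would go well beyond pattern matching.

```python
import re

# Loose pattern for email-like strings; an assumed heuristic, not a full validator.
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")

# Assumed list of keys that must never appear in a shareable card.
FORBIDDEN_KEYS = {"email", "user_id", "ip_address"}


def privacy_violations(card):
    """Return a list of human-readable problems found in a shareable card."""
    problems = [f"forbidden key: {key}"
                for key in sorted(card) if key in FORBIDDEN_KEYS]
    problems += [f"email-like text in {key}"
                 for key, value in sorted(card.items())
                 if isinstance(value, str) and EMAIL_RE.search(value)]
    return problems


def is_diverse(cards, min_types=3):
    """Check that the story covers at least `min_types` distinct card types."""
    return len({card["type"] for card in cards}) >= min_types


# Hypothetical story for one simulated profile.
story = [
    {"type": "top_artist", "text": "Your top artist surprised us."},
    {"type": "genre_shift", "text": "You moved from pop to jazz."},
    {"type": "late_night", "text": "You are a night owl."},
]
all_problems = [p for card in story for p in privacy_violations(card)]
```

For this story `all_problems` is empty and `is_diverse(story)` is true. Running checks like these over every simulated profile turns the checklist into something that can fail a build.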

Tips for Engineers and Curious Listeners

- For engineers: Consider using feature stores to manage reusable listening metrics across projects. Data pipelines should be idempotent to handle reprocessing without side effects.

- For listeners: Appreciate that every skip and replay shapes your Wrapped. The more diverse your listening, the richer your story becomes!

- General tip: Privacy is paramount. All Wrapped data is anonymized before being used for internal analysis—your personal highlights are never sold or shared without consent.

- Pro tip: If you want to influence next year’s Wrapped, actively explore new genres and create themed playlists. The algorithms love patterns!
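The idempotency tip for engineers is easiest to see in code. In this sketch a plain dictionary stands in for a feature store, and the row fields (`user_id`, `metric`, `period`, `value`) are hypothetical. The key idea is that a write overwrites by key instead of accumulating, so rerunning the same batch leaves the store unchanged.

```python
def upsert_metrics(store, rows):
    """Write rows keyed by (user_id, metric, period); reruns overwrite, never add."""
    for row in rows:
        key = (row["user_id"], row["metric"], row["period"])
        store[key] = row["value"]
    return store


# Hypothetical batch of yearly listening metrics.
batch = [
    {"user_id": "u1", "metric": "minutes_listened", "period": "2025", "value": 41250},
    {"user_id": "u1", "metric": "distinct_artists", "period": "2025", "value": 312},
]

store = upsert_metrics({}, batch)
snapshot = dict(store)

# Reprocessing the same batch must not change the result.
upsert_metrics(store, batch)
rerun_is_safe = store == snapshot
```

By contrast, a step that did `store[key] += row["value"]` would double the metrics on every rerun, which is exactly the side effect idempotent design avoids.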
